Music has been central to human cultures for tens of thousands of years, but how our brains perceive it has long been shrouded in mystery.

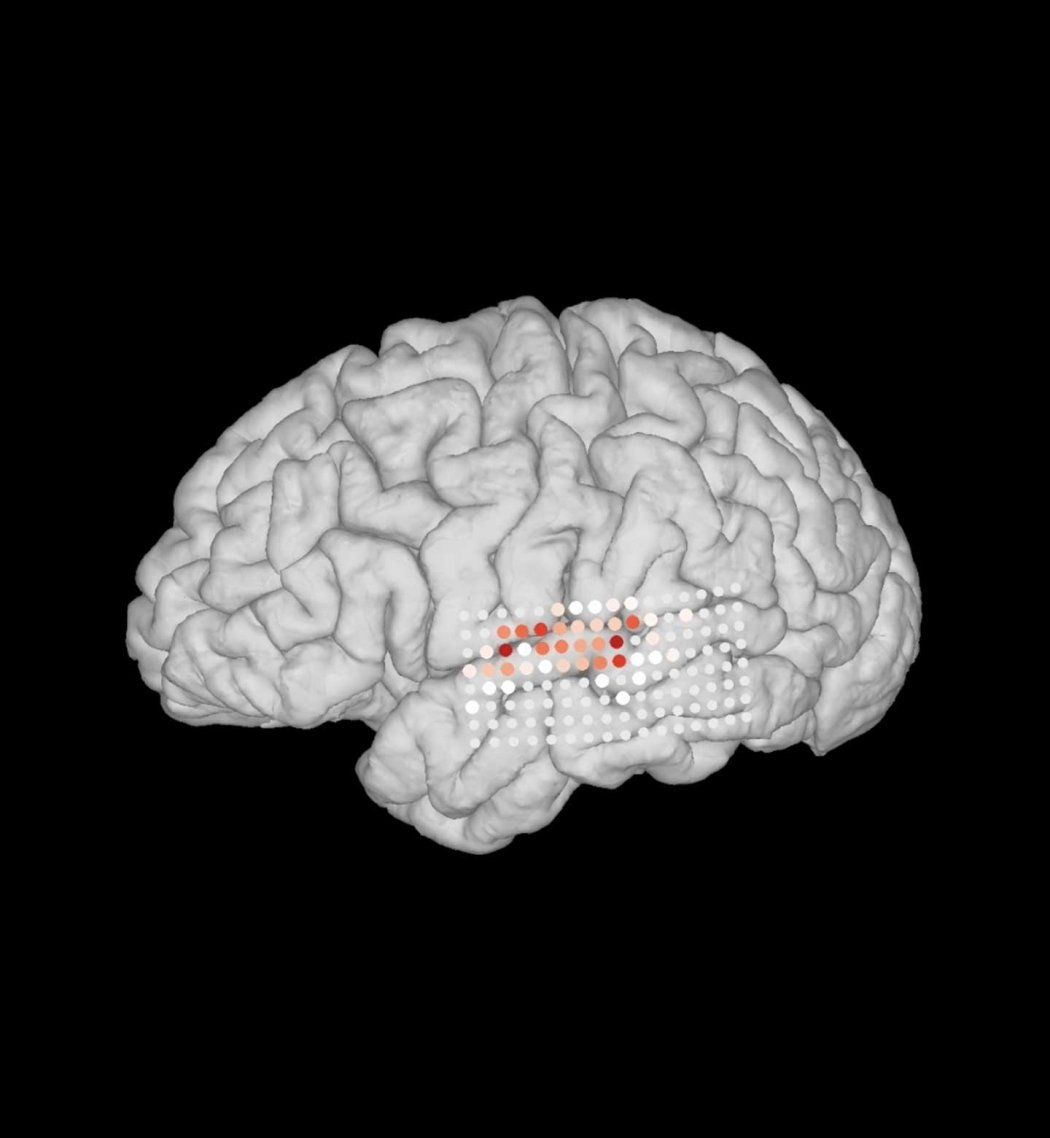

Now, researchers at UC San Francisco have developed a precise map of what is happening in the cerebral cortex when someone hears a melody.

The cortex turns out to be doing two things at once: following the pitch of a note, using two sets of neurons that also follow the pitch of speech, and trying to predict what notes will come next, using a set of neurons that are specific to music.

The study, appearing Feb. 16 in Science Advances, resolves long-standing questions about how melody is processed in the brain’s auditory cortex.

“We found that some of how we understand a melody is entwined with how we understand speech, while other important aspects of music stand alone,” said Edward Chang, MD, chair of neurosurgery and a member of the Weill Institute for Neurosciences at UCSF.

Predicting the next note

The first two groups of neurons turned out to be the same ones that Chang identified in an earlier study of how we process the changes in vocal pitch that lend meaning and emotion to speech.

The third group of neurons, however, is solely devoted to predicting melodic notes and is described here for the first time.

Chang’s team knew that something similar happens in speech: specialized neurons in the auditory cortex anticipate the next speech sound, or phoneme, based on what the brain has already learned about words and their context, much like the word-prediction function of a cell phone.

The researchers hypothesized that a similar group of neurons must exist for predicting melody.

Chang’s team tested this on eight participants who volunteered for research studies during their surgical workup for epilepsy. The team recorded brain activity directly from the auditory cortex while the participants listened to a variety of melodic phrases from Western music.

Then, they listened to sentences spoken in English.

The hypothesis proved correct. The recordings showed that the participants’ brains were using the same neurons to assess the qualities of pitch in both speech and music, but that each of these modes had specific neurons devoted to prediction.

In other words, the auditory cortex wasn’t just looking for notes. It also had a specialized set of neurons that was trying to predict which notes would come next, using what it already knew of melodic patterns.

“When we’re listening to music, two things are happening simultaneously,” Chang explained. “There’s a low-level processing of the individual notes of the melody, and then this high-level, abstract processing of the context of these notes.”

This makes sense because our brains evolved to anticipate upcoming information, said Narayan Sankaran, PhD, a postdoctoral scholar in the Chang Lab, who led the work. Listening to a melody can sway our emotions because the auditory neurons that process music are in conversation with emotional centers in the brain.

“Composers talk about musical tension and resolution,” Sankaran said. “Our ability to expect and anticipate these features of music explains how it can set an upbeat tone or bring us to tears.”

But much remains to be learned about those connections.

“It’s obvious that exposure to music enriches our social, emotional and intellectual lives and has potential to treat a broad range of conditions,” Sankaran said. “To understand why music is able to confer all these benefits, we need to answer some fundamental questions about how music works in the brain.”

Funding: This work was funded by the NIH (grant R01-DC012379) and philanthropy.