A study in patients with epilepsy is helping researchers understand how the brain manages the task of learning a new language while retaining our mother tongue. The study, by neuroscientists at UC San Francisco, sheds light on the age-old question of why it’s so difficult to learn a second language as an adult.

The somewhat surprising results gave the team a window into how the brain navigates the tradeoff between neuroplasticity — the ability to grow new connections between neurons when learning new things — and stability, which allows us to maintain the integrated networks of things we’ve already learned. The findings appear in the Aug. 30 issue of Proceedings of the National Academy of Sciences.

“When learning a new language, our brains are somehow accommodating both of these forces as they’re competing against each other,” said Matt Leonard, PhD, assistant professor of neurological surgery and a member of the UCSF Weill Institute for Neurosciences.

By using electrodes on the surface of the brain to follow high-resolution neural signals, the team found that clusters of neurons scattered throughout the speech cortex appear to fine-tune themselves as a listener gains familiarity with foreign sounds.

“These are our first insights into what’s changing in the brain between first hearing the sounds of a foreign language and being able to recognize them,” said Leonard, who is a principal investigator on the study.

“That in-between stage is a crucial step in language learning but has been difficult to tackle, because the process is dynamic and unique to the individual,” he said. “With this study, we were able to see what’s actually happening in the brain regions involved in differentiating sounds during this initial phase of learning.”

Shift as Foreign Sounds Become Familiar

Learning the sounds of a new language is the first step in learning to use that language, said Leonard. So for this study, Leonard and lead author and postdoctoral scholar Han Yi, PhD, investigated how the activity in the dispersed brain regions associated with language shifted as the listener became more familiar with the foreign sounds.

The team worked with 10 patient volunteers, aged 19 to 59, whose native language is English, and asked them to recognize speech sounds in Mandarin. Mandarin is a tonal language in which the meaning of the word relies not only on the vowel and consonant sounds but also on subtle changes in the pitch of the voice, known as tones. Speakers of non-tonal languages like English often find it very challenging to discern these unfamiliar sounds.

Each of the volunteers had previously had brain surgery, during which electrodes were implanted in their brains to locate the source of their seizures. The study included seven patients at the UCSF Epilepsy Center, and three in the Epilepsy Center at the University of Iowa Hospitals and Clinics. The volunteers agreed to allow Leonard and his team to gather data from high-density, 256-channel electrodes placed on the surface of the brain regions that process speech sounds.

Over the course of the next few days, Leonard and Yi worked with the volunteers individually, playing recordings of several native Mandarin speakers of different ages, both male and female, pronouncing syllables like “ma” and “di” using each of the four tones. After each sound the patient indicated whether they thought the tone was going up, down, up and then down, or staying flat, and received feedback on whether they were correct. Patients repeated this task about 200 times, over several five- to 10-minute sessions.

After that brief amount of time, Leonard said, people had gotten through the initial learning phase and had become somewhat adept at categorizing the sounds.

“We also saw a lot of variability,” he added. “Some people will get a bunch of trials right and then they’ll start getting them wrong and then they’ll get it right again in this kind of up-and-down that seems to be part of the learning process.”

Fine-Tuning Neural ‘Knobs’

When Leonard and Yi looked at the neural signals generated by the language learners, they saw a pattern that both surprised them and explained the performance curve they’d observed.

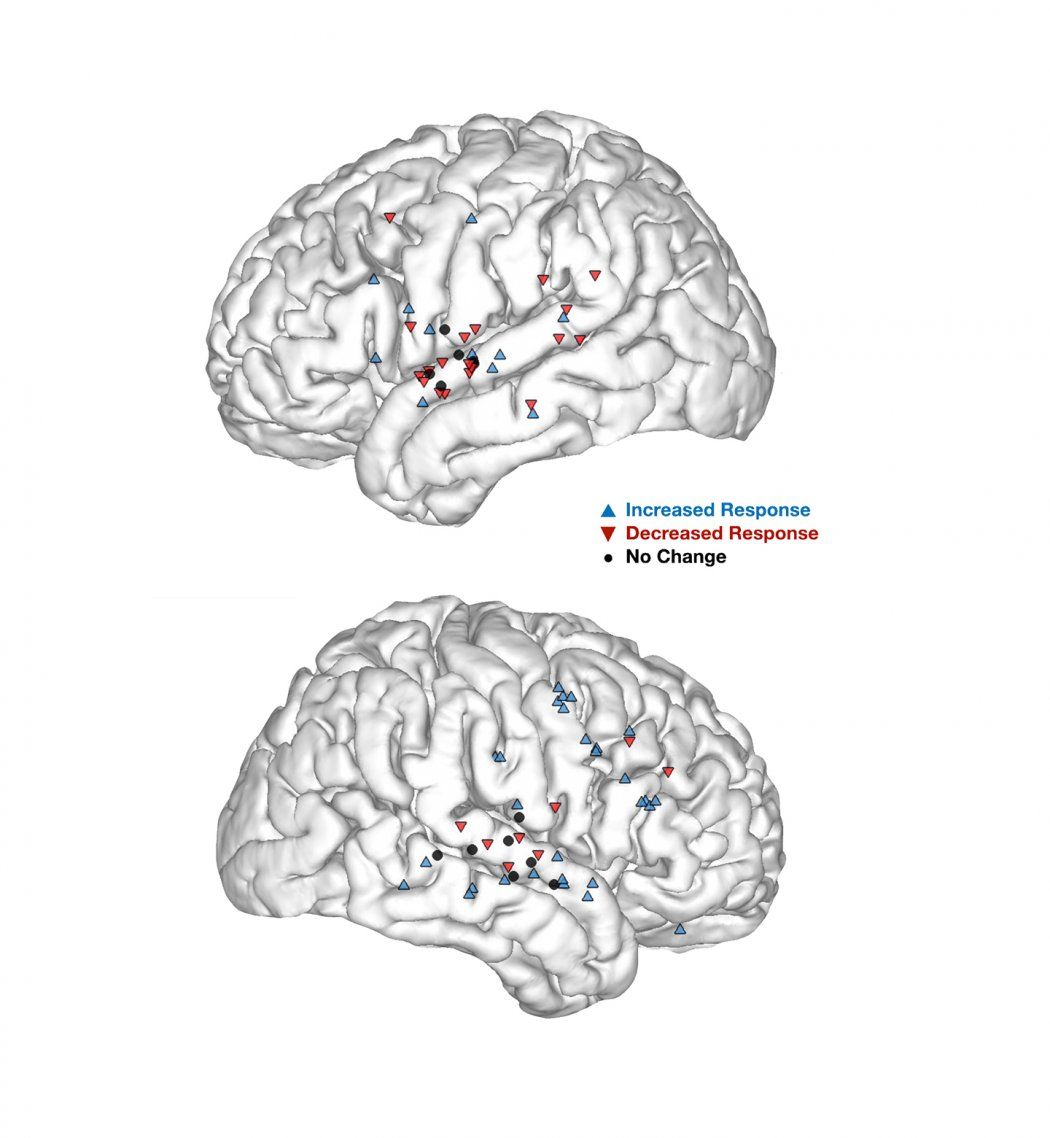

Data from other published studies suggested that activity across the speech cortex might increase as a person becomes more familiar with the language. What the researchers discovered instead was a spectrum of changes distributed throughout the speech cortex, with activity increasing in some areas but decreasing in others, maintaining a careful balance.

Those changes might be related to a brain area becoming tuned in to a particular tone, said Yi.

“We could see some groups of cells would respond more to the falling tone, and just keep ramping up their response, while right next to it another group of cells would increasingly engage when the person heard the dipping tone,” Yi said. “It’s as if these small clumps of neurons took on different roles.”

Matt Leonard, PhD, assistant professor in the UCSF Department of Neurological Surgery at the UCSF Weill Institute for Neurosciences, reviews data from electrocortical recordings made during work with epilepsy patients. Photo by Susan Merrell

In addition, the brain regions that responded more strongly to each tone varied from one individual to the next.

“It’s more like each person’s brain has a unique set of knobs that are getting fine-tuned while they’re becoming familiar with these sounds,” Leonard said.

Leonard and Yi think this may explain why some people pick up the sounds much more easily than others, as each unique brain strikes its own balance between maintaining the stability of the native language and calling on the plasticity required to learn a new one.

“The volunteers were able to learn the tones in Mandarin without affecting their ability to perceive pitch in English or in music,” said Leonard. “These little neural knobs were all communicating with each other to reach the point where they can do the task correctly by working together.”

Co-authors include William L. Schuerman, PhD, and Edward F. Chang, MD, of UCSF. Additional authors can be found in the paper. The work was supported by grants from DARPA (N66001-17-2-4008) and NIH (R01-DC012379, R01-DC0155004), as well as philanthropic foundations.

The University of California, San Francisco (UCSF) is exclusively focused on the health sciences and is dedicated to promoting health worldwide through advanced biomedical research, graduate-level education in the life sciences and health professions, and excellence in patient care. UCSF Health, which serves as UCSF’s primary academic medical center, includes top-ranked specialty hospitals and other clinical programs, and has affiliations throughout the Bay Area.